Global Issues

Justice or Neural Surveillance? The Human Rights Crisis in the Age of Neurotechnology

By Fransiscus Nanga Roka

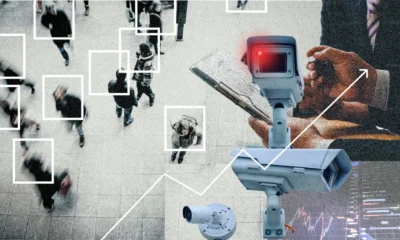

Throughout the history of criminal justice, each technological innovation has promised a more perfect search for the truth. Fingerprints transformed policing in the nineteenth century. DNA evidence revolutionized forensic science in the twentieth. Today a new frontier is emerging: the human brain itself. Neurotechnology tools that can measure or interpret neural activity have begun to enter discussions of evidence, interrogation, and prediction in criminal justice systems. What was once strictly the realm of science fiction is quickly becoming a legal reality. This raises a question: when courts begin peering into human minds, where is justice being served, and where does surveillance begin?

Neurotechnology refers to devices and systems capable of monitoring or analyzing patterns of neural activity. These include electroencephalography (EEG), functional magnetic resonance imaging (fMRI), and brain-computer interfaces (BCIs), along with a host of emerging tools for recognizing patterns associated with memory, recognition, emotion, and deception. “Brain fingerprinting” techniques now being tested in laboratories purport to determine with some certainty whether a person recognizes particular pieces of information. Other experiments look for neural activity associated with lying or intent.

In principle, these tools promise unprecedented accuracy. Imagine a courtroom where investigators can verify whether a suspect recalls the scene of a crime, where witness statements can be compared with neural responses, or where courts can assess criminal responsibility from patterns of brain activity. For some, neurotechnology marks the next era of evidence-based justice, one in which human perception and memory are backed by science.

If this hope proves elusive, the result could be far worse.

The human brain, unlike a fingerprint or DNA sample, is not just a unique identifier. It carries thoughts, memories, emotions, and even intentions. Access to neural data means approaching humanity’s most intimate territory: the mind itself. If governments or judicial authorities adopt brainwave scanning as a source of evidence, it could open an entirely new era in human rights.

At the heart of the debate is the idea of mental privacy: whether people should have a basic right to shield their thoughts from outside intrusion. Although the International Bill of Human Rights protects privacy, freedom of thought, and freedom from self-incrimination, these protections were conceived in a pre-digital age. Current legal frameworks were not designed for an era in which state agents might interpret neural signals as legal evidence.

This leaves a potentially dangerous gap in the law.

One of the first casualties could be the right to remain silent. In many legal systems, an accused person cannot be forced to incriminate themselves. But what if, in the future, authorities ask a suspect no questions and instead simply scan that person’s brain? If the scan clearly shows the suspect recognizing damning evidence, is that testimony or merely physical evidence?

The answer is far from clear. Courts have traditionally treated physical evidence and statements differently. DNA samples, fingerprints, and blood tests are often admitted as evidence even when obtained without consent. If neural data were treated the same way, governments might argue that brain scans are just another forensic tool.

Yet the superficial similarities between brain and computer are misleading. One processes biological information; the other processes signals that may or may not have been intended by an agent other than ourselves. Give the state access to such information, and not only does the scope of its power change sharply, but citizens also find themselves facing a justice system of a completely different character. Such access would turn the brain from a private mental space into a potential crime scene.

One major risk lies in the accuracy of neurotechnology itself. The human brain is extraordinarily complex, and interpreting neural signals remains scientifically uncertain. Brain activity varies widely between individuals and contexts, so false positives and misinterpretations are possible. The technology is also vulnerable to overconfidence and misuse. Polygraph machines were widely used for decades on a dubious scientific basis before courts in many jurisdictions ruled that they fail to meet rigorous evidentiary standards. Neurotechnology may be on a similar course, with possibly greater consequences.

The risk is not only scientific but also political and ethical. In authoritarian settings, neurotechnology could easily become a tool of oppression. Governments might use brain-based methods to probe the minds of suspects, assess their ideological loyalty, and monitor for signs of dissent. In democratic societies, too, powerful investigative technologies may prove difficult to resist, particularly when terrorism or national security comes into play. Without solid safeguards, neurotechnology may gradually institute an entirely new category of cognitive surveillance: the monitoring of human thought itself.

The emerging field of “neurorights” is trying to meet this threat. Scholars and policymakers have begun to propose new human rights provisions designed specifically for the age of neurotechnology. These include the right to mental privacy, the right to cognitive liberty, and safeguards against the manipulation or extraction of neural data without consent.

This is more than mere enthusiasm: some countries have already taken steps along these lines. Chile, for example, has introduced provisions in its constitution specifically related to neurotechnology. International agencies have also recognized the importance of developing ethical principles to guide the governance of neural data. Yet these initiatives remain largely a patchwork, with glaring gaps.

What is needed is a coherent international framework that accounts for the particular risks neurotechnology poses to justice systems. Such a framework must rest on clear principles: neural data must be treated as highly sensitive information; forced brain interrogation must be prohibited; and courts should admit neurotechnology-based evidence only if it meets rigorous scientific standards.

The challenge ahead, however, is not to halt technological development. Science will continue to expand the realm of what is achievable. The real challenge is ensuring that innovation does not move so quickly that legal and moral safeguards cannot keep up and protect human dignity.

Throughout history, the struggle for human rights has concentrated mainly on protecting a person’s body from abuse and their voice from violence. The next step may be safeguarding the mind itself.

The risk is clear: unless the law acts now, justice systems built to establish facts may become instruments for probing that last citadel, the human mind.

And once that frontier has been breached, it may be impossible to restore.